Welcome to On Statistics

-

Dunnett’s Test: Navigating the Labyrinth of Multiple Comparisons

In statistical analysis, particularly when comparing multiple groups to a control, multiple comparisons can pose a significant challenge. Conducting numerous individual hypothesis tests increases the risk of making at least one Type I error, where we erroneously reject a null hypothesis (i.e., falsely conclude that a difference exists) simply due to chance. To mitigate this inflated risk,…
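
The inflation described in this excerpt can be illustrated with a small calculation. This is a minimal sketch, assuming the tests are independent and all use the same significance level; both assumptions are made here for illustration and are not stated in the excerpt. The function name `familywise_error_rate` is hypothetical.

```python
def familywise_error_rate(alpha: float, k: int) -> float:
    """Probability of at least one false rejection across k tests.

    Assumes the k tests are independent and each is run at level alpha
    (an illustrative simplification, not a general result).
    """
    return 1.0 - (1.0 - alpha) ** k


if __name__ == "__main__":
    # Show how the chance of at least one Type I error grows with the
    # number of comparisons when each test uses alpha = 0.05.
    for k in (1, 3, 5, 10):
        print(f"{k:>2} tests: {familywise_error_rate(0.05, k):.3f}")
```

Under these assumptions, three comparisons at a 0.05 level already carry roughly a 14% chance of at least one false positive, which is the kind of inflation that multiple-comparison procedures are meant to control.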

-

Multicollinearity in Regression: A Thorny Issue Unveiled

In the realm of regression analysis, where we seek to understand the relationships between variables, multicollinearity emerges as a critical yet often perplexing obstacle. It signifies a situation where two or more independent variables, the very foundation of our predictions, exhibit a strong linear dependence on each other. This inherent correlation, while seemingly harmless at…

-

Unveiling the Uncertainty: Understanding the Standard Error of the Regression

In regression analysis, we examine the relationships between variables. While understanding the slope coefficients and their significance is crucial, another vital concept emerges: the standard error of the regression (S). This statistic summarizes how far the observed values typically fall from the predicted values generated by our regression…

-

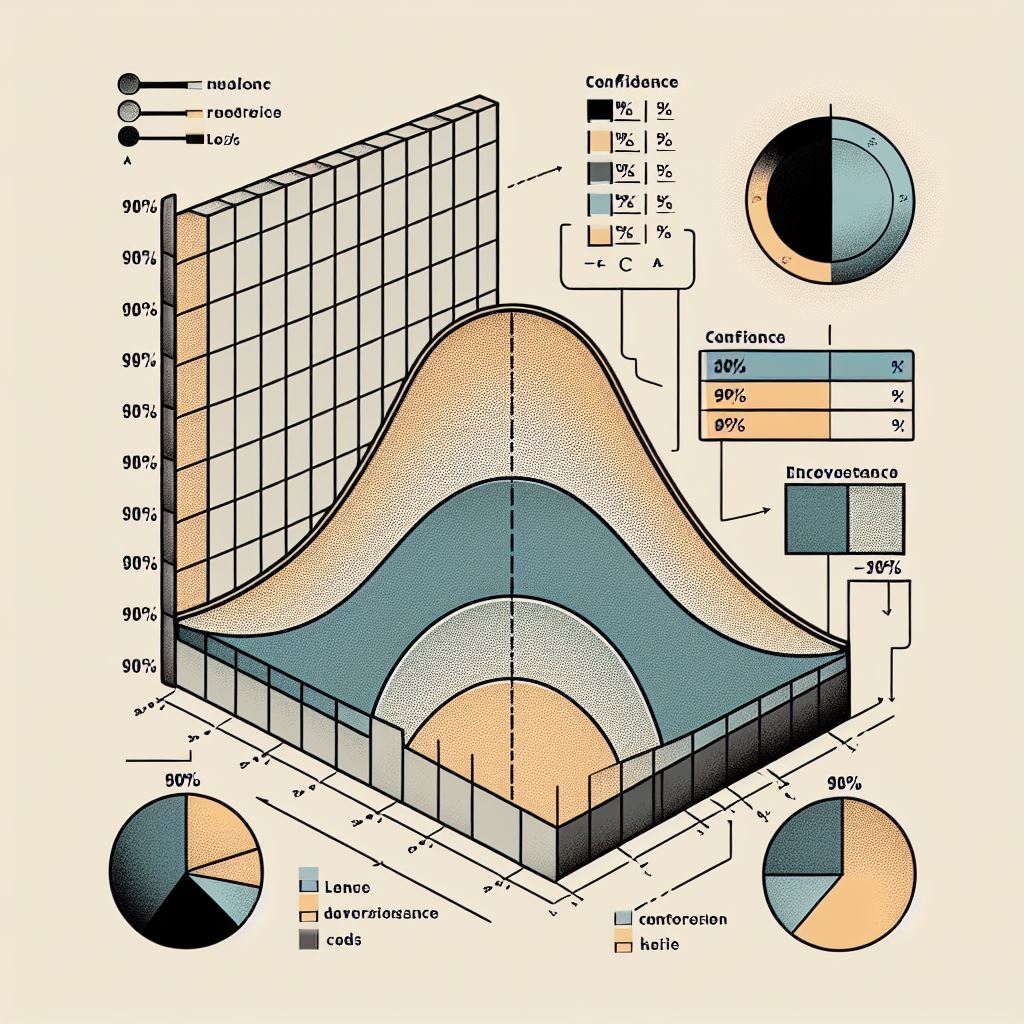

What are Confidence Intervals?

Confidence intervals (CIs) provide a range of values that, at a specified level of confidence, is expected to contain the true population parameter. Imagine a dartboard where you’re aiming to hit the bullseye (true population parameter). Throwing a single dart (point estimate) might land somewhere on the board, but it doesn’t guarantee hitting the…

-

Exploring Latin Hypercube Sampling: A Powerful Tool for Efficient Uncertainty Quantification

In the realm of science and engineering, understanding how uncertainties in various factors can influence a system’s behavior is crucial. This is where Latin Hypercube Sampling (LHS) emerges as a powerful tool for efficiently analyzing the impact of these uncertainties. What is Latin Hypercube Sampling? Latin Hypercube Sampling is a sophisticated probability sampling technique used…